It is easy to be impressed by large-scale projections, single-touch screens, multi-touch mobiles (think iPhone), interactive tabletops and the like. However, while such screens allow "virtual" buttons and keyboards to be popped up, panned and rotated anywhere, they lack the low-attention, vision-free tactile qualities of physical controls. Instead, they require continuous visual attention to know *where* to press or click.

So is it possible to merge these new display technologies with physical buttons that dynamically appear and disappear, depending on the actual interface that is shown on the screen? Chris Harrison, a Ph.D. student, recently proposed that it can be done in a research project [chrisharrison.net]. (One might note that Chris is not unknown on this blog. He is also the author behind data visualization projects such as Digg Rings, Amazon Book Map, Google Trigrams and Visualizing the Bible.)

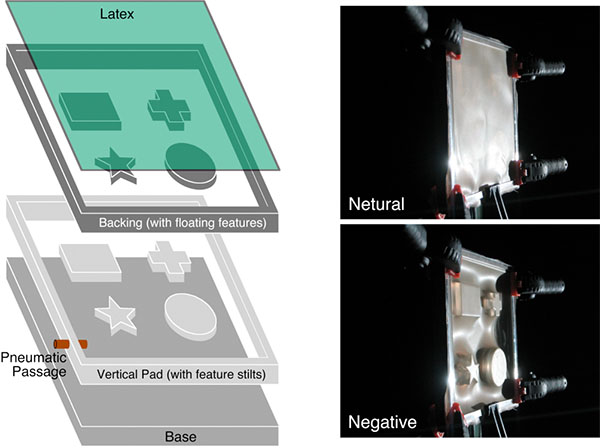

The outcome of his investigation is a working visual display that contains specific deformable areas, able to produce physical buttons and other interface elements. These tactile features can be dynamically brought "into" and "out of" the interface, and otherwise manipulated under program control. The surfaces provide the full dynamics of a visual display (through rear projection) while also allowing for multi-touch input (through an infrared lighting and camera setup behind the display).

See this innovative display in action in the video below.

This is just awesome ... Chris Harrison is my new hero ;)

Would be great to see the actual shapes change dynamically as well - so the designer will be able to specify what shapes will be formed on the latex and where, rather than the static pre-defined shapes in these prototypes. An exciting development in UI design though :-)